Data Provenance Is Not Enough. Regulated Operators Need Decision Provenance.

Most operations log the action. The decision evaporates. That's the gap AI is now exposing.

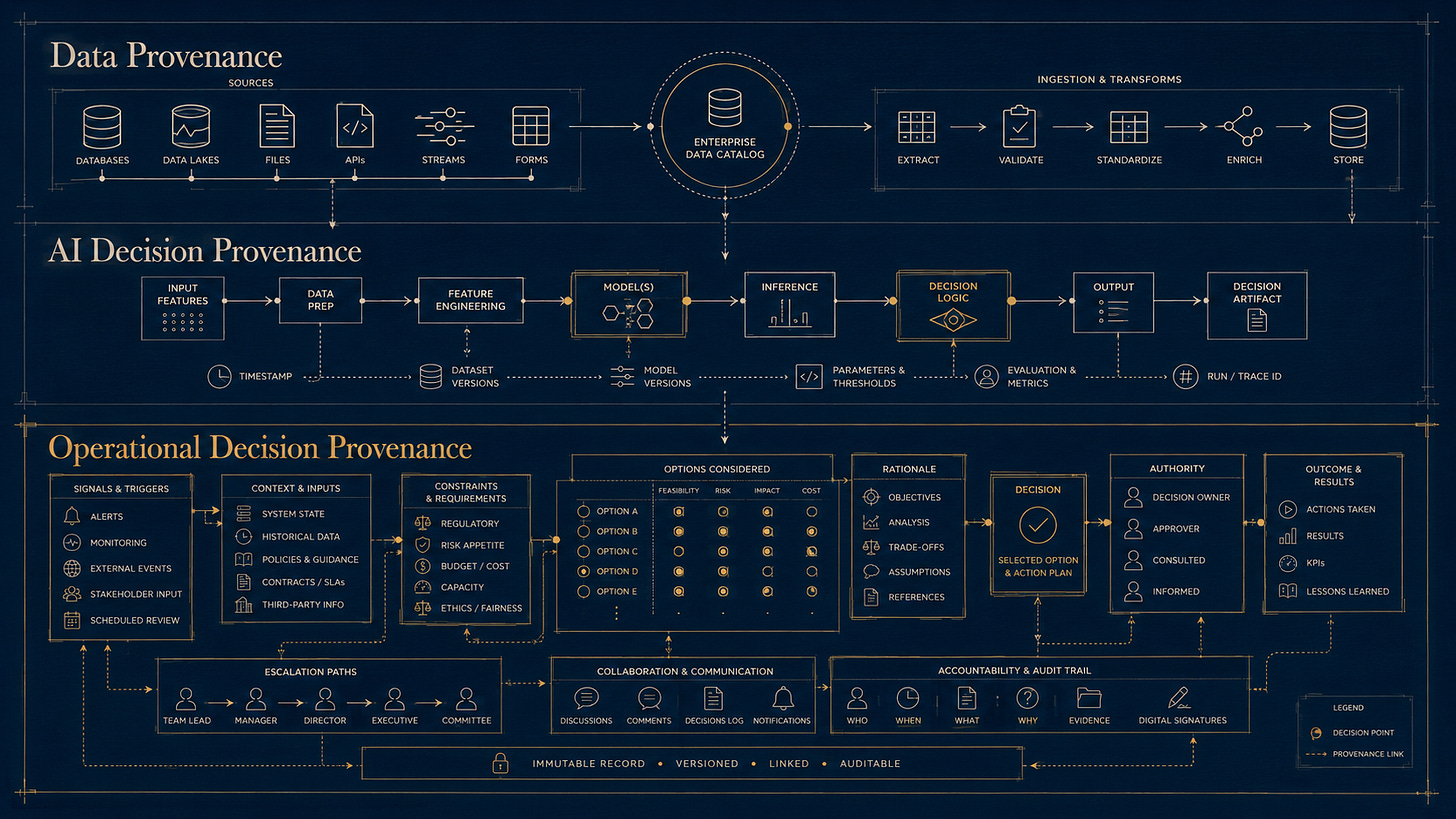

Three layers of provenance: data provenance, AI decision provenance, operational decision provenance

Most conversations about AI trust still circle around data provenance. Where did the training data come from. Who labeled it. What’s the chain of custody. Whether the model’s outputs can be traced back to inputs the operator can verify.

That’s necessary. It’s not sufficient.

The next frontier — the one operators in regulated industries haven’t named yet — is decision provenance. Specifically, provenance for the human decisions AI participates around.

The term decision provenance already exists in AI accountability research. Earlier work by Singh, Cobbe, and Norval framed decision provenance around exposing decision pipelines for accountable algorithmic systems. Newer commercial efforts apply similar concepts to AI decision records and auditability.

That work matters. But regulated operations have a harder problem.

The decision that matters in aviation, maintenance, dispatch, safety leadership, and accountable executive roles is often not made by the AI. It is made by a qualified human operating under authority, constraint, uncertainty, and time pressure. If the operation cannot reconstruct that decision, it cannot safely govern the AI participating around it.

That is a third tier of provenance. I’ll call it operational decision provenance for regulated human decisions, and it is the tier operators are not yet addressing.

Three tiers of provenance.

Data provenance answers: can I trust the inputs the AI used.

AI decision provenance answers: how did the algorithmic system produce its output, and can it be audited.

Operational decision provenance answers: how did the accountable human decision form, and can it be reconstructed.

The three are related. They are not interchangeable.

A flight is delayed because of an unresolved maintenance discrepancy. The AI assistant correctly surfaces the relevant MEL deferral conditions. The dispatcher reviews the data and recommends a routing alternate because it avoids known or forecast icing. The assigned captain reviews the mission and elects to delay because they are not comfortable accepting it under the conditions. An incoming captain scheduled for a post-trip crew swap is comfortable with the mission and has enough duty day remaining to operate it after an additional delay. The Director of Operations concurs.

Data provenance can verify that the AI used current MEL data. AI decision provenance can audit the recommendation engine that surfaced the deferral conditions. Neither captures what actually happened in the human pathway: the dispatcher’s read on weather trends, the assigned captain’s judgment and comfort level, the incoming captain’s different assessment of the same conditions, the Director’s weighing of operational pressure against safety culture, or the unspoken signals present in the room.

If those layers evaporate, the operation has logged a delay and a completion. It has not captured an articulated decision.

What operational decision provenance actually means.

A decision in regulated operations is more than its outcome. The outcome is the artifact most systems capture. The decision itself is everything that produced the outcome:

The signals the human noticed. The constraints they were operating against. The inputs they consulted. The options they considered. The rationale for the option they chose. The uncertainty they accepted. The time pressure they were under. The stakeholder alignment or dissent in the room. The override they exercised, if any. The action they finally took.

Most operations capture the last item. The action. The rest evaporates.

Operational decision provenance is the discipline of capturing the full pathway — not as a compliance checkbox, but as the structural record of how the decision was actually reached.
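As a rough illustration, the pathway elements listed above could be held in one structured record. The sketch below is a minimal Python example, not SayFlight's implementation: the `DecisionRecord` class, its field names, and the `missing_elements` helper are all hypothetical, chosen only to mirror that list. The sample values are paraphrased from the delayed-flight example earlier in this article.

```python
from dataclasses import dataclass, field, fields
from typing import List, Optional


@dataclass
class DecisionRecord:
    """Hypothetical record of one accountable human decision."""
    decision_maker: str
    action: str                                    # what most systems already log
    signals: List[str] = field(default_factory=list)
    constraints: List[str] = field(default_factory=list)
    inputs_consulted: List[str] = field(default_factory=list)
    options_considered: List[str] = field(default_factory=list)
    rationale: str = ""
    uncertainty_accepted: str = ""
    time_pressure: str = ""
    alignment_or_dissent: List[str] = field(default_factory=list)
    override: Optional[str] = None                 # None means no override was taken

    def missing_elements(self) -> List[str]:
        """Return the names of pathway elements that were left empty."""
        optional = {"override"}
        return [f.name for f in fields(self)
                if f.name not in optional and not getattr(self, f.name)]


# An outcome-only log entry: the action is present, the pathway is not.
outcome_only = DecisionRecord(decision_maker="assigned captain",
                              action="delay the flight")

# A fuller entry (illustrative values only).
fuller = DecisionRecord(
    decision_maker="assigned captain",
    action="delay the flight",
    signals=["known or forecast icing", "unresolved maintenance discrepancy"],
    constraints=["MEL deferral conditions"],
    inputs_consulted=["AI-surfaced MEL deferral conditions",
                      "dispatcher's recommended routing alternate"],
    options_considered=["accept the mission", "delay"],
    rationale="not comfortable accepting the mission under the conditions",
)

print(outcome_only.missing_elements())
print(fuller.missing_elements())
```

The helper exists to make the gap visible. An outcome-only entry reports nearly every pathway element as missing, which is the evaporation described above.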

When that pathway is captured with provenance intact, the decision becomes something the operation has never had before: a fully articulated, reconstructible picture of how a senior human reasoned under pressure.

Three things change when decisions have provenance.

The decision becomes teachable. Most operations rely on senior people to model good decision-making for less senior people through proximity. The senior dispatcher takes the call, the junior dispatcher watches, the company hopes the pattern transfers. Without decision provenance, the only thing that transfers is the outcome. With decision provenance, the rationale and reasoning transfer too. The junior dispatcher does not have to wait years to see enough patterns. The patterns are captured.

The decision becomes trainable. AI systems learn from data. The data they currently learn from in regulated work is mostly outcomes. Outcomes by themselves are a dangerous training signal. They reward what worked once, not what was good reasoning under uncertainty. Operational decision provenance gives AI systems the input-rationale-outcome triple that lets them learn what good decisions actually look like, not just which outcomes happened to succeed. This is the difference between training an AI to imitate luck and training an AI to support reasoning.
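To make the triple concrete, here is a minimal Python sketch. It assumes decisions have already been logged as simple dictionaries. The `TrainingTriple` type, the `to_triple` helper, and the dictionary keys are hypothetical names used for illustration only. The point it shows is narrow: an entry with no captured rationale yields no training example, because an outcome alone says nothing about the reasoning behind it.

```python
from dataclasses import dataclass
from typing import Optional, Sequence, Tuple


@dataclass(frozen=True)
class TrainingTriple:
    """Hypothetical input-rationale-outcome triple."""
    inputs: Tuple[str, ...]
    rationale: str
    outcome: str


def to_triple(inputs: Sequence[str], rationale: Optional[str],
              outcome: str) -> Optional[TrainingTriple]:
    """Build a triple only when inputs and rationale were actually captured."""
    if not inputs or not rationale or not rationale.strip():
        return None
    return TrainingTriple(tuple(inputs), rationale.strip(), outcome)


# Hypothetical log entries; the second one recorded only the outcome.
logged = [
    {"inputs": ["forecast icing", "MEL deferral conditions"],
     "rationale": "captain not comfortable accepting the mission under the conditions",
     "outcome": "flight delayed, then completed"},
    {"inputs": [], "rationale": None, "outcome": "flight completed"},
]

candidates = (to_triple(e["inputs"], e["rationale"], e["outcome"]) for e in logged)
triples = [t for t in candidates if t is not None]

print(len(triples), "of", len(logged), "entries are usable as triples")
```

Dropping outcome-only entries is a deliberate choice in this sketch. Whether to discard them, flag them, or send them back for capture is a design decision each operation would make for itself.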

The decision becomes referenceable. A less senior decision-maker facing a similar situation in the future can pull up the provenance record of how a senior decision-maker reasoned through a comparable case. Not the answer. The reasoning. They get to start their decision higher up the curve than they would have without that reference.
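One simple way to picture that lookup is a similarity search over past records. The Python sketch below assumes each past record carries a set of condition labels and a free-text rationale. The `comparable_reasoning` function, the record keys, and the use of Jaccard overlap as the similarity measure are my illustrative assumptions, not a prescribed method. Note that it returns the recorded reasoning, not the action taken.

```python
from typing import Dict, Iterable, List, Set


def jaccard(a: Set[str], b: Set[str]) -> float:
    """Share of condition labels two cases have in common (0.0 to 1.0)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def comparable_reasoning(current: Iterable[str], past: List[Dict],
                         top_n: int = 3) -> List[str]:
    """Return rationales from the most similar past cases, best match first."""
    now = set(current)
    scored = [(jaccard(now, set(rec["conditions"])), rec["rationale"])
              for rec in past]
    relevant = [pair for pair in scored if pair[0] > 0]
    relevant.sort(key=lambda pair: pair[0], reverse=True)
    return [rationale for _, rationale in relevant[:top_n]]


# Hypothetical past records with made-up condition labels.
past_records = [
    {"conditions": {"forecast icing", "open MEL deferral", "crew duty limit"},
     "rationale": "delayed; icing margin too thin with the deferred item"},
    {"conditions": {"forecast icing"},
     "rationale": "rerouted around the icing layer"},
    {"conditions": {"runway closure"},
     "rationale": "held for runway reopening"},
]

matches = comparable_reasoning({"forecast icing", "open MEL deferral"},
                               past_records)
for rationale in matches:
    print(rationale)
```

A real system would need richer matching than label overlap. The sketch only shows the shape of the idea: conditions in, prior reasoning out.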

That last point is the one most operators do not yet see clearly. Operational decision provenance does not just capture knowledge. It compresses the time it takes for a less senior person to operate closer to senior level without losing decision quality.

Why this matters now.

Senior operational expertise is the scarcest and most fragile asset in regulated industries. The expertise of every senior dispatcher, captain, A&P mechanic, accountable executive, and Director of Operations is one resignation, retirement, or burnout episode away from being unrecoverable. The tacit knowledge they hold (what to notice, when to escalate, how to weigh competing pressures) does not transfer through procedures alone.

Most operations have responded to this by writing more procedures. That works at the margins. It does not transfer the judgment.

Operational decision provenance is a different mechanism. It captures the conditions around the judgment — the signals, assumptions, constraints, dissent, rationale, authority, outcomes, and feedback — without claiming to extract the judgment itself. The judgment stays with the qualified human who held the authority. The conditions around it become legible.

Less senior decision-makers operating with that legibility do not become senior overnight. They do operate closer to senior level faster than they would have without it.

That is the actual return on operational decision provenance. Not compliance. Not audit trail. Compounding institutional knowledge.

Why AI makes this urgent.

AI is pushing decision-making toward either automation or acceleration in nearly every regulated industry. Most operators are responding either by resisting AI or by adopting it without architecture.

Both responses are wrong for the same reason. Both treat the AI as the variable. The variable is the decision pathway. AI is one participant in that pathway. Whether the AI participates well depends on whether the pathway is articulated well enough for AI to operate inside it without compromising the human authority that regulation requires to remain accountable.

That articulation is what operational decision provenance produces. The structured record of how decisions get made — captured before AI is added, maintained as AI participation grows, and used as the training and reference substrate that lets the operation compound rather than degrade.

Without operational decision provenance, AI in regulated work is a black box bolted onto another black box. With it, AI becomes a participant in a system the operator can actually see.

Where this leaves operators.

The shift from data provenance to operational decision provenance is not optional in regulated work. It is the move that determines whether AI participation remains governable, whether human accountability stays intact, and whether the operation gets smarter over time or slowly loses the senior judgment it cannot replace.

The operators that build this discipline now will compound it for years. The ones that wait will find the AI has already been participating in decisions they cannot reconstruct.

Data provenance is about trust in inputs. Operational decision provenance is about accountability in execution.

You can only automate what you can clearly articulate. The articulation is the asset. Operational decision provenance is what makes it durable.

At SayFlight, Operational Decision Architecture is the framework I built to structure that work for regulated operators. It maps decision authority, AI participation boundaries, escalation pathways, decision logic, and evidence capture so operational judgment can be reconstructed, learned from, and governed over time.

The discipline does not happen by accident. It has to be designed.

Toby Benenson is the founder of SayFlight and the creator of Operational Decision Architecture, a provisionally patented framework for governing AI participation in regulated operations. He spent 18 years in business aviation operations, including COO roles at Desert Jet and FlyHouse. He holds an FAA Aircraft Dispatcher Certificate and a Private Pilot Certificate, and he is a Duke University graduate.

SayFlight advises operators on AI governance, operational decision architecture, and fractional COO engagements.

toby@sayflight.ai · www.sayflight.ai

Note: SayFlight has filed U.S. provisional patent applications covering Operational Decision Architecture and Operational Decision Provenance in regulated operations.

References and further reading

Singh, J., Cobbe, J., and Norval, C. (2019). “Decision Provenance: Harnessing data flow for accountable systems.” IEEE Access, 7, 6562-6574. DOI: 10.1109/ACCESS.2018.2887201

Cobbe, J., Lee, M.S.A., and Singh, J. (2021). “Reviewable Automated Decision-Making: A Framework for Accountable Algorithmic Systems.” Proceedings of the 2021 ACM Conference on Fairness, Accountability, and Transparency (FAccT ’21), March 3-10, 2021. DOI: 10.1145/3442188.3445921

Tabassi, E. (2023). Artificial Intelligence Risk Management Framework (AI RMF 1.0). NIST AI 100-1. National Institute of Standards and Technology. DOI: 10.6028/NIST.AI.100-1